Multi Model Advisor

by YuChenSSR

Provides diverse AI perspectives by querying multiple Ollama models and synthesizing their responses into a single answer.

Multi Model Advisor Overview

What is Multi Model Advisor about?

Multi Model Advisor creates a "council of advisors" by sending the same question to several locally‑hosted Ollama models, each with its own persona, and then combines the individual replies into a comprehensive response.

How to use Multi Model Advisor?

- Install – either run

npx -y @smithery/cli install @YuChenSSR/multi-ai-advisor-mcp --client claudeor clone the repo, runnpm installandnpm run build. - Configure – create a

.envfile withOLLAMA_API_URL,DEFAULT_MODELS, and the system prompts for each model. - Connect to Claude – add an entry to

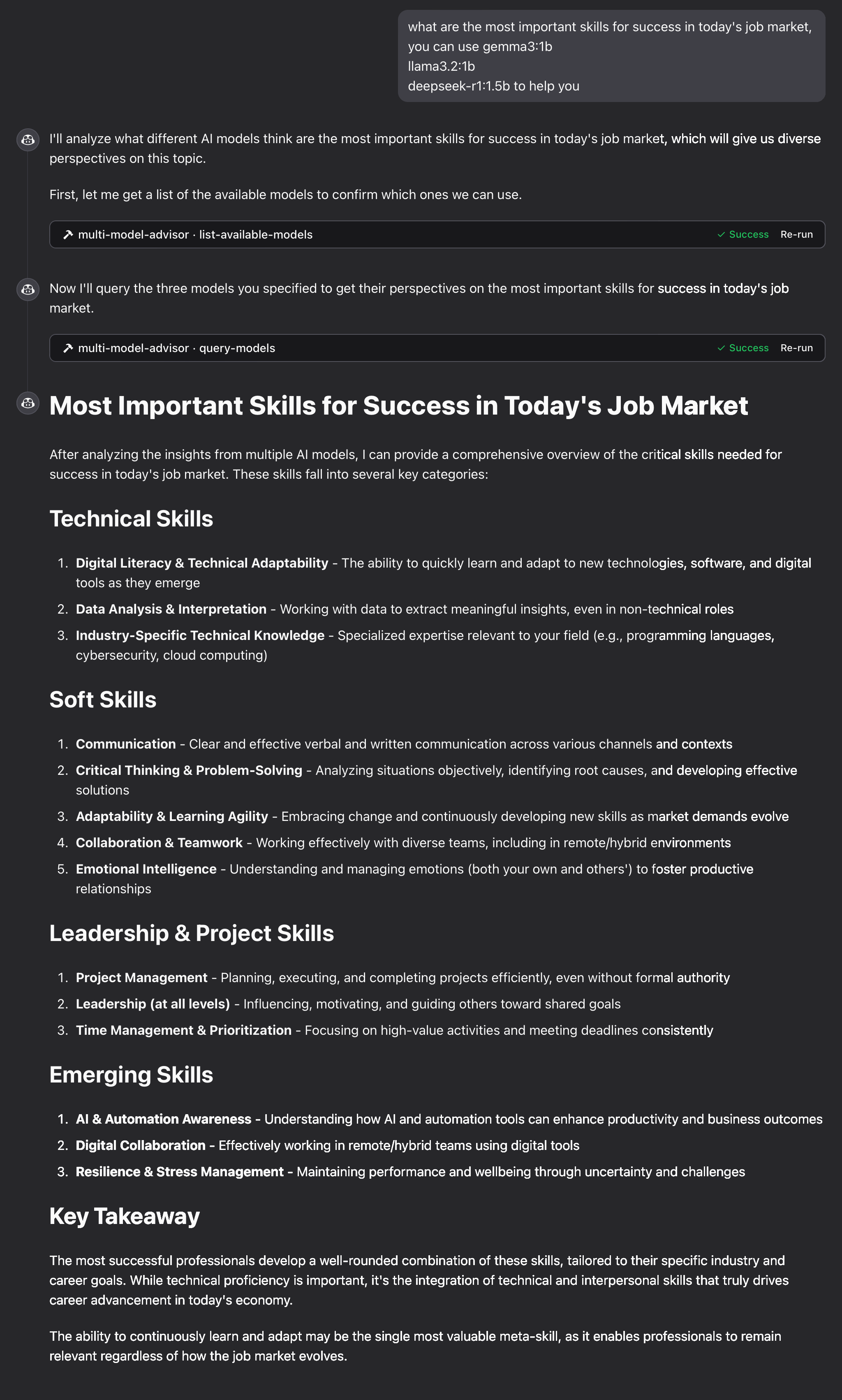

claude_desktop_config.jsonpointing tobuild/index.js. - Ask a question – in Claude, reference the advisor (e.g., "what are the most important skills for success in today's job market, you can use gemma3:1b, llama3.2:1b, deepseek-r1:1.5b to help you"). Claude will invoke the MCP tools, the server queries the models, and Claude synthesizes the combined answer.

Key Features

- Query multiple Ollama models with a single prompt

- Assign distinct roles/personas via custom system prompts

- List all Ollama models installed on the host

- Environment‑variable driven configuration (server name, version, debug mode, API URL, default model list)

- Seamless integration with Claude for Desktop

- Simple installation via Smithery or a manual npm workflow

Use Cases

- Decision‑making: Gather technical, ethical, and business viewpoints before committing to a strategy.

- Creative brainstorming: Combine imaginative, analytical, and empathetic suggestions for design or content ideas.

- Learning & tutoring: Present explanations from different pedagogical styles to suit varied learner preferences.

- Risk assessment: Contrast cautious and aggressive advice to evaluate potential outcomes.

FAQ

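Q: Do I need an internet connection?

A: Not for the model queries themselves. All Ollama models run locally, and the server only needs the local Ollama HTTP API. Installing the packages and pulling models does require network access, and Claude for Desktop itself connects to Anthropic's online service.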

Q: How many models can I query simultaneously?

A: Any number listed in DEFAULT_MODELS. Performance depends on CPU/RAM; smaller models are recommended for limited resources.

Q: Can I add my own Ollama models?

A: Yes. Pull the model with ollama pull <model-name> and add it to DEFAULT_MODELS or reference it directly in prompts.

Q: What if a model fails to respond?

A: The server returns the responses it received; missing models are reported in the combined output.

Q: How do I change a model’s persona?

A: Edit the corresponding *_SYSTEM_PROMPT variable in .env and restart the server.

Multi Model Advisor's README

Multi-Model Advisor

(锵锵四人行, roughly "a lively chat among four")

A Model Context Protocol (MCP) server that queries multiple Ollama models and combines their responses, providing diverse AI perspectives on a single question. This creates a "council of advisors" approach where Claude can synthesize multiple viewpoints alongside its own to provide more comprehensive answers.

Features

- Query multiple Ollama models with a single question

- Assign different roles/personas to each model

- View all available Ollama models on your system

- Customize system prompts for each model

- Configure via environment variables

- Integrate seamlessly with Claude for Desktop

Prerequisites

- Node.js 16.x or higher

- Ollama installed and running (see Ollama installation)

- Claude for Desktop (for the complete advisory experience)

Installation

Installing via Smithery

To install multi-ai-advisor-mcp for Claude Desktop automatically via Smithery:

npx -y @smithery/cli install @YuChenSSR/multi-ai-advisor-mcp --client claude

Manual Installation

- Clone this repository:

git clone https://github.com/YuChenSSR/multi-ai-advisor-mcp.git
cd multi-ai-advisor-mcp

- Install dependencies:

npm install

- Build the project:

npm run build

- Install the required Ollama models:

ollama pull gemma3:1b
ollama pull llama3.2:1b
ollama pull deepseek-r1:1.5b

Configuration

Create a .env file in the project root with your desired configuration:

# Server configuration
SERVER_NAME=multi-model-advisor
SERVER_VERSION=1.0.0
DEBUG=true

# Ollama configuration
OLLAMA_API_URL=http://localhost:11434
DEFAULT_MODELS=gemma3:1b,llama3.2:1b,deepseek-r1:1.5b

# System prompts for each model
GEMMA_SYSTEM_PROMPT=You are a creative and innovative AI assistant. Think outside the box and offer novel perspectives.
LLAMA_SYSTEM_PROMPT=You are a supportive and empathetic AI assistant focused on human well-being. Provide considerate and balanced advice.
DEEPSEEK_SYSTEM_PROMPT=You are a logical and analytical AI assistant. Think step-by-step and explain your reasoning clearly.
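The README does not say how the server pairs each entry in DEFAULT_MODELS with one of the *_SYSTEM_PROMPT variables. The TypeScript sketch below assumes a simple convention: match the prompt variable whose prefix (gemma, llama, deepseek) starts the model name, and fall back to a generic prompt otherwise. The names resolvePersonas, parseModelList, and FALLBACK_PROMPT are hypothetical, not the project's code.

```typescript
// Hypothetical sketch: pair each model in DEFAULT_MODELS with a persona prompt.
// ASSUMPTION: the prompt variable is chosen by model-name prefix
// (e.g. "gemma3:1b" -> GEMMA_SYSTEM_PROMPT); the real server may differ.

type Env = Record<string, string | undefined>;

interface Persona {
  model: string;
  system: string;
}

// Hypothetical default used when no prefix matches.
const FALLBACK_PROMPT = "You are a helpful AI assistant.";

// Model-name prefix -> environment variable holding that family's prompt.
const PROMPT_VARS: Array<[string, string]> = [
  ["gemma", "GEMMA_SYSTEM_PROMPT"],
  ["llama", "LLAMA_SYSTEM_PROMPT"],
  ["deepseek", "DEEPSEEK_SYSTEM_PROMPT"],
];

// " a, b,,c " -> ["a", "b", "c"]: trim whitespace and drop empty entries.
function parseModelList(value: string | undefined): string[] {
  return (value ?? "")
    .split(",")
    .map((m) => m.trim())
    .filter((m) => m.length > 0);
}

function resolvePersonas(env: Env): Persona[] {
  return parseModelList(env.DEFAULT_MODELS).map((model) => {
    const lower = model.toLowerCase();
    const match = PROMPT_VARS.find(([prefix]) => lower.startsWith(prefix));
    // Use the matching variable if it is set and non-empty, else the fallback.
    const system = (match && env[match[1]]) || FALLBACK_PROMPT;
    return { model, system };
  });
}

// Usage with (shortened) values from the example .env above.
const personas = resolvePersonas({
  DEFAULT_MODELS: "gemma3:1b,llama3.2:1b,deepseek-r1:1.5b",
  GEMMA_SYSTEM_PROMPT: "You are a creative and innovative AI assistant.",
  LLAMA_SYSTEM_PROMPT: "You are a supportive and empathetic AI assistant.",
  DEEPSEEK_SYSTEM_PROMPT: "You are a logical and analytical AI assistant.",
});
console.log(personas.map((p) => `${p.model}: ${p.system}`).join("\n"));
```

Keeping the prefix table separate from the lookup makes it easy to add another model family: add one [prefix, variable] pair and the matching line in .env.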

Connect to Claude for Desktop

- Locate your Claude for Desktop configuration file:

  - macOS: ~/Library/Application Support/Claude/claude_desktop_config.json
  - Windows: %APPDATA%\Claude\claude_desktop_config.json

- Edit the file to add the Multi-Model Advisor MCP server:

{
  "mcpServers": {
    "multi-model-advisor": {
      "command": "node",
      "args": ["/absolute/path/to/multi-ai-advisor-mcp/build/index.js"]
    }
  }
}

- Replace /absolute/path/to/ with the actual path to your project directory.

- Restart Claude for Desktop.
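If claude_desktop_config.json already lists other MCP servers, the new entry has to be merged in rather than overwriting the file. The sketch below shows that merge on an already-parsed config object. withAdvisorServer is a hypothetical helper, and it assumes the entry shape from the JSON example above (command "node" plus the absolute path to build/index.js).

```typescript
// Hypothetical helper: add (or replace) the advisor entry in a parsed
// claude_desktop_config.json object while keeping every other server.

interface McpServerEntry {
  command: string;
  args: string[];
}

interface ClaudeDesktopConfig {
  mcpServers?: Record<string, McpServerEntry>;
  [key: string]: unknown; // other top-level settings are passed through
}

function withAdvisorServer(
  config: ClaudeDesktopConfig,
  projectDir: string,
  serverName = "multi-model-advisor",
): ClaudeDesktopConfig {
  // Strip trailing slashes/backslashes so we never produce "dir//build".
  const dir = projectDir.replace(/[\\/]+$/, "");
  const entry: McpServerEntry = {
    command: "node",
    args: [`${dir}/build/index.js`],
  };
  // Build a new object; the input config is not mutated.
  return {
    ...config,
    mcpServers: { ...(config.mcpServers ?? {}), [serverName]: entry },
  };
}

// Usage: an existing config that already has one unrelated server.
const existing: ClaudeDesktopConfig = {
  mcpServers: { other: { command: "npx", args: ["some-other-server"] } },
};
const updated = withAdvisorServer(existing, "/Users/me/multi-ai-advisor-mcp/");
console.log(JSON.stringify(updated, null, 2));
```

Writing the result back with JSON.stringify(updated, null, 2) keeps the file readable; Claude for Desktop still needs a restart afterwards.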

Usage

Once connected to Claude for Desktop, you can use the Multi-Model Advisor in several ways:

List Available Models

You can see all available models on your system:

Show me which Ollama models are available on my system

This will display all installed Ollama models and indicate which ones are configured as defaults.
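A listing like this can be built from Ollama's GET /api/tags endpoint, which returns { models: [{ name, size, ... }] }. The sketch below formats such a response and flags the entries that also appear in DEFAULT_MODELS. formatModelList is a hypothetical name, not the project's function, and the sizes in the usage example are illustrative rather than measured.

```typescript
// Sketch: format a GET /api/tags response and mark configured default models.
// Only the fields used here are typed; the real response has more.

interface OllamaTag {
  name: string;
  size: number; // bytes on disk
}

interface TagsResponse {
  models: OllamaTag[];
}

function formatModelList(tags: TagsResponse, defaults: string[]): string[] {
  const defaultSet = new Set(defaults);
  return tags.models.map((m) => {
    const gb = (m.size / 1e9).toFixed(1); // decimal gigabytes, one digit
    const flag = defaultSet.has(m.name) ? " (default)" : "";
    return `${m.name} - ${gb} GB${flag}`;
  });
}

// Usage with a hand-written response (illustrative sizes).
const sample: TagsResponse = {
  models: [
    { name: "gemma3:1b", size: 815000000 },
    { name: "phi3:mini", size: 2200000000 },
  ],
};
const lines = formatModelList(sample, ["gemma3:1b", "llama3.2:1b"]);
console.log(lines.join("\n"));
```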

Basic Usage

Simply ask Claude to use the multi-model advisor:

what are the most important skills for success in today's job market, you can use gemma3:1b, llama3.2:1b, deepseek-r1:1.5b to help you

Claude will query all default models and provide a synthesized response based on their different perspectives.
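Under the hood, each model receives the same question but its own persona. A minimal sketch of the request bodies, assuming the server uses Ollama's POST /api/generate endpoint (its model, prompt, system, and stream fields are part of Ollama's API; whether this project uses /api/generate or /api/chat is an assumption). buildGenerateRequests is a hypothetical helper.

```typescript
// Sketch: one /api/generate request body per model. The user question goes in
// `prompt`, the persona in `system`; `stream: false` asks Ollama for a single
// JSON reply instead of a stream of chunks.

interface Persona {
  model: string;
  system: string;
}

interface GenerateRequest {
  model: string;
  prompt: string;
  system: string;
  stream: false;
}

function buildGenerateRequests(question: string, personas: Persona[]): GenerateRequest[] {
  return personas.map((p): GenerateRequest => ({
    model: p.model,
    prompt: question,
    system: p.system,
    stream: false,
  }));
}

// Usage: each body would be POSTed as JSON to `${OLLAMA_API_URL}/api/generate`.
const question = "what are the most important skills for success in today's job market";
const requests = buildGenerateRequests(question, [
  { model: "gemma3:1b", system: "You are a creative and innovative AI assistant." },
  { model: "deepseek-r1:1.5b", system: "You are a logical and analytical AI assistant." },
]);
console.log(JSON.stringify(requests[0]));
```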

How It Works

- The MCP server exposes two tools:

  - list-available-models: Shows all Ollama models on your system
  - query-models: Queries multiple models with a question

- When you ask Claude a question referring to the multi-model advisor:

  - Claude decides to use the query-models tool
  - The server sends your question to multiple Ollama models
  - Each model responds with its perspective
  - Claude receives all responses and synthesizes a comprehensive answer

- Each model can have a different "persona" or role assigned, encouraging diverse perspectives.
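The fan-out step of query-models can be sketched as follows: query every model concurrently, turn each failure into a reported result so one slow or missing model does not abort the rest, and merge everything into one text block for Claude. The generate function is injected (hypothetical) so the sketch does not depend on a particular HTTP client, and queryAll, combineResults, and the "##" output layout are illustrative, not the server's actual code.

```typescript
// Sketch of the query-models fan-out: concurrent calls, failures captured
// per model, then one combined text block.

type Generate = (model: string, question: string) => Promise<string>;

type ModelResult =
  | { model: string; ok: true; text: string }
  | { model: string; ok: false; error: string };

function queryAll(models: string[], question: string, generate: Generate): Promise<ModelResult[]> {
  return Promise.all(
    models.map((model) =>
      generate(model, question).then(
        (text): ModelResult => ({ model, ok: true, text }),
        // A rejection becomes a normal value, so Promise.all never short-circuits.
        (err: unknown): ModelResult => ({ model, ok: false, error: String(err) }),
      ),
    ),
  );
}

function combineResults(results: ModelResult[]): string {
  return results
    .map((r) => (r.ok ? `## ${r.model}\n${r.text}` : `## ${r.model}\n[no response: ${r.error}]`))
    .join("\n\n");
}

// Usage with hand-made results (no Ollama needed).
const combined = combineResults([
  { model: "gemma3:1b", ok: true, text: "Adaptability." },
  { model: "llama3.2:1b", ok: false, error: "Error: model not found" },
]);
console.log(combined);
```

Mapping each rejection to a value before Promise.all has the same effect as Promise.allSettled, but keeps a single typed result shape that the combine step can switch on.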

Troubleshooting

Ollama Connection Issues

If the server can't connect to Ollama:

- Ensure Ollama is running (ollama serve)

- Check that the OLLAMA_API_URL is correct in your .env file

- Try accessing http://localhost:11434 in your browser to verify Ollama is responding
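The same check can be scripted. The sketch below resolves the base URL the way the example .env suggests, falling back to http://localhost:11434 (Ollama's default port) when OLLAMA_API_URL is unset, and probes Ollama's GET /api/version endpoint. The injected get function is an assumption; in Node 18+ it could simply wrap the built-in fetch. ollamaBaseUrl and checkOllama are hypothetical names.

```typescript
// Sketch: resolve the Ollama base URL and probe /api/version.
// `get` is injected so the sketch works with any HTTP client.

type Get = (url: string) => Promise<{ status: number }>;

function ollamaBaseUrl(envValue: string | undefined): string {
  const raw = (envValue ?? "").trim() || "http://localhost:11434";
  return raw.replace(/\/+$/, ""); // drop trailing slashes
}

async function checkOllama(envValue: string | undefined, get: Get): Promise<string> {
  const url = `${ollamaBaseUrl(envValue)}/api/version`;
  try {
    const res = await get(url);
    return res.status === 200 ? `OK: ${url}` : `Unexpected HTTP ${res.status} from ${url}`;
  } catch (err) {
    return `Cannot reach ${url} (is "ollama serve" running?): ${String(err)}`;
  }
}

// Example (Node 18+): checkOllama(process.env.OLLAMA_API_URL, (u) => fetch(u)).then(console.log);
console.log(ollamaBaseUrl("http://127.0.0.1:11434/"));
```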

Model Not Found

If a model is reported as unavailable:

- Check that you've pulled the model using ollama pull <model-name>

- Verify the exact model name using ollama list

- Use the list-available-models tool to see all available models
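A common cause of "model not found" is a name mismatch. Ollama treats a name without a tag as the ":latest" tag, so "llama3.2" and "llama3.2:latest" refer to the same model. The sketch below compares the requested names against the installed ones (from ollama list or GET /api/tags) under that rule and prints one pull command per missing model. findMissingModels and normalizeModelName are hypothetical helpers.

```typescript
// Sketch: find requested models that are not installed, treating an untagged
// name as ":latest". The tag check looks only at the part after the last "/",
// so a registry host with a port (e.g. "localhost:5000/foo") is not mistaken for a tag.

function normalizeModelName(name: string): string {
  const trimmed = name.trim();
  const lastSegment = trimmed.slice(trimmed.lastIndexOf("/") + 1);
  return lastSegment.includes(":") ? trimmed : `${trimmed}:latest`;
}

function findMissingModels(requested: string[], installed: string[]): string[] {
  const have = new Set(installed.map(normalizeModelName));
  return requested.filter((m) => !have.has(normalizeModelName(m)));
}

// Usage: installed names as they might appear in `ollama list`.
const missing = findMissingModels(
  ["gemma3:1b", "llama3.2", "deepseek-r1:1.5b"],
  ["gemma3:1b", "llama3.2:latest"],
);
// `ollama pull` takes one model at a time, so print one command per model.
console.log(missing.length ? missing.map((m) => `ollama pull ${m}`).join("\n") : "All models installed");
```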

Claude Not Showing MCP Tools

If the tools don't appear in Claude:

- Ensure you've restarted Claude after updating the configuration

- Check the absolute path in claude_desktop_config.json is correct

- Look at Claude's logs for error messages

Not Enough RAM

Larger models may need more memory than your machine has available. Try specifying smaller models in your question (see Basic Usage) or upgrading your RAM.
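One way to pick smaller models is a simple heuristic, not part of the project: take installed models smallest-first until their combined size reaches a memory budget. The sketch uses the on-disk size reported by GET /api/tags, which is only a rough lower bound because loaded models typically need extra memory for their context, so leave headroom. pickModelsWithinBudget is a hypothetical helper, and the sizes below are illustrative.

```typescript
// Heuristic sketch: choose up to `maxCount` models, smallest first, whose
// combined on-disk size fits within `budgetBytes`.

interface InstalledModel {
  name: string;
  size: number; // bytes on disk, as reported by GET /api/tags
}

function pickModelsWithinBudget(models: InstalledModel[], budgetBytes: number, maxCount = 3): string[] {
  const sorted = [...models].sort((a, b) => a.size - b.size); // copy, then sort ascending
  const picked: string[] = [];
  let used = 0;
  for (const m of sorted) {
    if (picked.length >= maxCount) break;
    if (used + m.size <= budgetBytes) {
      picked.push(m.name);
      used += m.size;
    }
  }
  return picked;
}

const GB = 1e9;
const installedModels: InstalledModel[] = [
  { name: "llama3.1:8b", size: 4.9 * GB }, // illustrative sizes
  { name: "gemma3:1b", size: 0.8 * GB },
  { name: "llama3.2:1b", size: 1.3 * GB },
  { name: "deepseek-r1:1.5b", size: 1.1 * GB },
];
const chosen = pickModelsWithinBudget(installedModels, 4 * GB);
console.log(`DEFAULT_MODELS=${chosen.join(",")}`);
```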

License

MIT License

For more details, please see the LICENSE file in this project repository.

Contributing

Contributions are welcome! Please feel free to submit a Pull Request.


Related MCP Servers

Discover more MCP servers with similar functionality and use cases

LibreChat

Client by danny-avila

Provides a customizable ChatGPT‑like web UI that integrates dozens of AI models, agents, code execution, image generation, web search, speech capabilities, and secure multi‑user authentication, all open‑source and ready for self‑hosting.

Blender

by ahujasid

BlenderMCP integrates Blender with Claude AI via the Model Context Protocol (MCP), enabling AI-driven 3D scene creation, modeling, and manipulation. This project allows users to control Blender directly through natural language prompts, streamlining the 3D design workflow.

Pydantic AI

by pydantic

Enables building production‑grade generative AI applications using Pydantic validation, offering a FastAPI‑like developer experience.

Figma

by GLips

Figma-Context-MCP is a Model Context Protocol (MCP) server that provides Figma layout information to AI coding agents. It bridges design and development by enabling AI tools to directly access and interpret Figma design data for more accurate and efficient code generation.

Mcp Use

Client by mcp-use

Easily create and interact with MCP servers using custom agents, supporting any LLM with tool calling and offering multi‑server, sandboxed, and streaming capabilities.

Talk To Figma

by sonnylazuardi

This project implements a Model Context Protocol (MCP) integration between Cursor AI and Figma, allowing Cursor to communicate with Figma for reading designs and modifying them programmatically.

WhatsApp MCP Server

by lharries

WhatsApp MCP Server is a Model Context Protocol (MCP) server for WhatsApp that allows users to search, read, and send WhatsApp messages (including media) through AI models like Claude. It connects directly to your personal WhatsApp account via the WhatsApp web multi-device API and stores messages locally in a SQLite database.

GitMCP

by idosal

GitMCP is a free, open-source remote Model Context Protocol (MCP) server that transforms any GitHub project into a documentation hub, enabling AI tools to access up-to-date documentation and code directly from the source to eliminate "code hallucinations."

Discord

Official by Klavis-AI

Klavis AI provides open-source Model Context Protocol (MCP) integrations and a hosted API for AI applications. It simplifies connecting AI to various third-party services by managing secure MCP servers and authentication.